A prosthetic eye to treat blindness

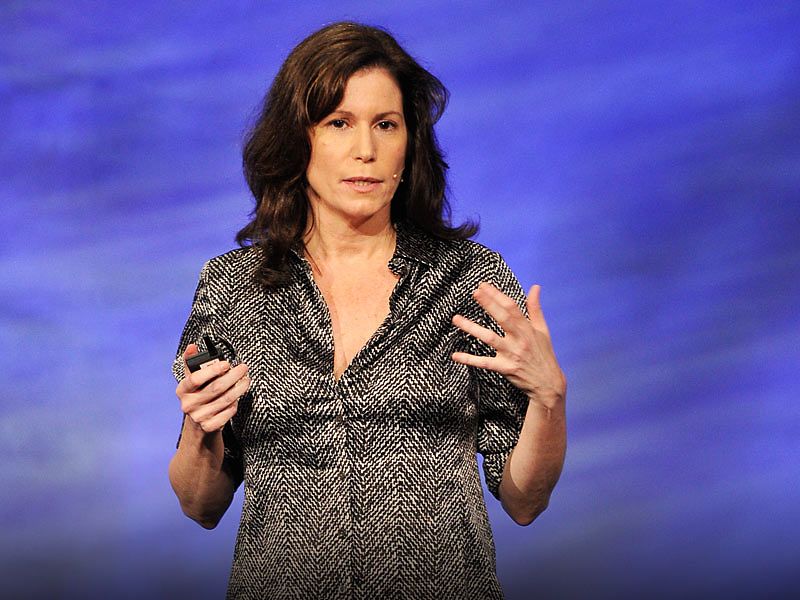

Sheila Nirenberg

TEDMED 2011

• October 2011

At TEDMED, Sheila Nirenberg shows a bold way to create sight in people with certain kinds of blindness: by hooking into the optic nerve and sending signals from a camera directly to the brain.